NPS© Emission

In the NPS emission system, the spectrum distribution of audio frequencies is applied in two different modes, which for simplicity we refer to as Horizontal Distribution and Vertical Distribution.

PREMISE

Soundstage:

The whole “acoustic space” that surrounds the listener, mainly in the frontal direction: the entire volume perceived as occupied by acoustic sources, both virtual (such as reflected ones) and phantom (existing only in the listener’s perception). An example of a phantom source is the central image created when a stereo system is fed a monophonic signal: the perception of a non-existent source, recreated by complex psychoacoustic phenomena.

– While listening live the “prevailing” sources are the real ones.

– While listening to a stereo or Dolby Surround system the prevailing sources are the virtual and phantom ones.

The reproduced and perceived acoustic scene varies with every change in the transduction system’s type, form and dimensions, its emission peculiarities and impulse response, and also with the listener’s own experience of real or reproduced sound and his physical and psychological condition during each listening session.

Normally we accept that the acoustic scene can be characterized by a width (more or less stable), a height (often highly uncertain) and a depth, within which the different acoustic planes can be more or less distinguishable.

THE HORIZONTAL NPS

In every stereophonic system the maximum width of the reconstructed virtual scene coincides with the speaker separation.

In every listening position the perceived acoustic signal is the sum of the direct field value in that position (received directly from the speaker) and the reverberant field value, made of all the environmental reflections and sound tails.

When listening to a musical signal in a normal living room, the reverberant field strongly dominates the direct field at low frequencies, while at high frequencies exactly the opposite is true.
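The balance between the two fields can be estimated with the classical diffuse-field relation of room acoustics, in which the direct sound falls off with distance while the reverberant level is set by the room's absorption. The following is only a minimal sketch: the function name, the room size, and the directivity and absorption figures per frequency are assumed illustrative values, not measurements of any real room or loudspeaker.

```python
import math

def direct_to_reverberant_db(q, distance_m, surface_m2, absorption):
    """Direct-to-reverberant ratio in dB from the classical diffuse-field model.

    q          : directivity factor of the source (1 = omnidirectional)
    distance_m : source-to-listener distance in metres
    surface_m2 : total surface area of the room in square metres
    absorption : mean absorption coefficient of the room surfaces (0..1)
    """
    room_constant = surface_m2 * absorption / (1.0 - absorption)
    direct = q / (4.0 * math.pi * distance_m ** 2)
    reverberant = 4.0 / room_constant
    return 10.0 * math.log10(direct / reverberant)

# Assumed, illustrative values: a speaker usually becomes more directive
# and a furnished room more absorbent as frequency rises.
ASSUMED_BANDS = {
    100: {"q": 1.0, "absorption": 0.10},
    1000: {"q": 4.0, "absorption": 0.20},
    8000: {"q": 15.0, "absorption": 0.35},
}

SURFACE_M2 = 94.0  # hypothetical 5 m x 4 m x 3 m living room
DISTANCE_M = 3.0   # hypothetical listening distance

for freq_hz, band in ASSUMED_BANDS.items():
    ratio = direct_to_reverberant_db(
        band["q"], DISTANCE_M, SURFACE_M2, band["absorption"]
    )
    print(f"{freq_hz:>5} Hz: direct/reverberant = {ratio:+.1f} dB")
```

With these assumed figures the ratio is negative at 100 Hz (reverberant field dominant) and rises with frequency until the direct field prevails in the highest band.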

THE PROBLEM

If the listener is equidistant from the two stereo speakers and these are generating signals with equal spectra, he will perceive a single phantom source at the center position between the two speakers. When the listener cannot stay exactly on the symmetry axis of the stereo system, every shift in his position will raise the level perceived from the now nearer speaker and lower the level from the other. The most important point is that, in terms of overall perceived level and because of the reverberant field, if the shift stays within a normal listening area in a domestic environment, the level variations will be mostly confined to frequencies above 1,000 to 2,000 Hz.

The level variations that may appear at lower frequencies can be positive or negative, depending on the first reflections and standing waves particular to that room, and we do not take them into account as localization cues. So asymmetric listening with traditional speakers having constant or very wide directivity patterns will always be affected by two kinds of distortion:

– Perspective, due to phantom sources shift towards the nearer speaker.

This happens for all acoustic sources except those generated by left-only or right-only signals. The apparent width of the acoustic scene does not change, but the scene is deformed: compressed on one side and stretched on the other.

Virtual acoustic sources shift with the displacement of the listening position (after Bauer).

– Tonal, due to the decrease in mid- and high-frequency level perceived from the farther speaker, together with the increase in the same frequencies from the nearer one.
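The size of the direct-field differences behind these two distortions can be estimated from simple geometry. The sketch below is an illustration under stated assumptions: the function name and the layout (2 m between speakers, listener 3 m back) are hypothetical, the speakers are treated as ideal point sources with no directivity, and the reverberant field is ignored.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value in air at room temperature

def off_axis_differences(separation_m, distance_m, shift_m):
    """Direct-field level and arrival-time differences between two speakers.

    The speakers sit at x = -separation/2 and x = +separation/2 on the line
    y = 0; the listener sits at (shift_m, distance_m).
    Returns (level_difference_db, time_difference_ms): how much louder and
    how much earlier the nearer speaker is heard than the farther one.
    """
    half = separation_m / 2.0
    d_left = math.hypot(shift_m + half, distance_m)
    d_right = math.hypot(shift_m - half, distance_m)
    nearer, farther = min(d_left, d_right), max(d_left, d_right)
    level_db = 20.0 * math.log10(farther / nearer)  # inverse-distance law
    delay_ms = (farther - nearer) / SPEED_OF_SOUND * 1000.0
    return level_db, delay_ms

# Hypothetical layout: 2 m between speakers, listener 3 m back.
for shift in (0.0, 0.25, 0.5, 1.0):
    level_db, delay_ms = off_axis_differences(2.0, 3.0, shift)
    print(f"shift {shift:4.2f} m: level {level_db:4.2f} dB, delay {delay_ms:4.2f} ms")
```

Both differences are zero on the symmetry axis and grow as the listener moves sideways, which is the shift of the phantom sources towards the nearer speaker described above.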

THE SOLUTION

Stevens & Newman demonstrated in a classic experiment that, in order to localise sound sources in space, our auditory system uses temporal as well as intensity information. The phantom image created when two real sources emit signals with equal spectra will be the one whose sound arrives at the listener’s ears with the smallest delay and level differences.

The experiment also established that for frequencies below 1,500 Hz time differences are the most important, while for frequencies above 3,000 to 4,000 Hz intensity differences prevail.

Recalling what has already been said about the characteristics of the direct and reverberant sound fields in domestic listening environments, and knowing that low frequencies and reverberant fields cannot provide directional information, it seems evident that a stereo system designed to offer correct localization cues based on intensity variations at high frequencies is the right one to adopt. So, according to the horizontal NPS, in order to overcome the perspective and tonal distortions due to an asymmetric listening position, we must observe the following two statements:

1) In normal listening rooms the phantom image localization offered by a stereo system is related to the intensity differences between the two channels at frequencies higher than 1,000 to 2,000 Hz.

2) The system must also compensate for perspective distortions with frequency-dependent level changes that achieve overall tonal invariance across the whole listening area.

The NPS-1000 Insignis loudspeaker system provides exactly the horizontal orientation of the emission lobes (30°) required for a listening distance equal to 1.5 times the speaker separation. The final result is a system whose emission is correctly oriented and decreases with frequency according to a carefully predetermined pattern. By choosing to distribute the horizontal spectra in this way as a function of the emission angle, we obtain the following advantages:

1) The ability to correctly perceive all the different positions of virtual and phantom sources from every listening position;

2) Correct tonal information for any source from every listening position.

VERTICAL NPS

In the vertical NPS, the vertical expansion of the acoustic scene and the very important auto-dimensioning effect of each virtual source, related to the frequency spectrum of the real source, are obtained by giving each of the system’s transducer groups a vertical dimension well matched to the center frequency of the band it has to reproduce.

The vertical centers of the band emission zones (physically formed by the system’s ways and transducer groups) are placed at very similar heights above the floor.

The NPS-1000 Insignis system has a vertical dimension that strongly dominates the other two, and the transducers are spaced at very large distances from each other. This choice is based on our auditory system’s ability to discriminate the vertical angle of arrival (Rodgers).

Natural acoustic sources are placed in a real three-dimensional space and are themselves three-dimensional. Our auditory system can separate the different sound signals arriving from all directions, equally well in the horizontal and in the vertical plane, and thanks to this capability it can better separate the signal it wants to attend to from the background noise (as when we speak and listen amid the confusion of a very crowded room: the “Cocktail Party Effect”).

When we listen to a system with a single emission point, this sound intensity vector operation is no longer possible. By distributing many vertical emission zones of different dimensions at different heights, we give our auditory system the opportunity to separate the different acoustic signals by differences both in spectrum and in vertical angle of arrival. This listening condition is undoubtedly much more realistic than one that reduces all the natural dimensions to a single central point (as for an ideal pulsating sphere).

By choosing to distribute the acoustic spectra vertically as a function of the angle of arrival, we obtain the following advantages:

1) The ability to easily resolve complex programs into their elementary signals;

2) Assignment of a realistic vertical dimension to the acoustic scene;

3) Auto-dimensioning of the emission zones that closely matches the characteristics of real acoustic sources.

By distributing the audio spectrum in this way in both the horizontal and the vertical plane, we obtain a reconstructed scene whose three-dimensionality and stability render the loudspeaker system virtually invisible, giving the listener the sensation of almost taking part in the musical event.

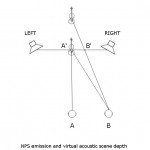

THE NPS ACOUSTIC SCENE DEPTH CONTROL

The acoustic images whose perspective position the NPS system corrects are those that contain mid and high frequencies.

The perspective correction is more or less pronounced depending on the weight of these frequencies in relation to the overall spectrum of the signal.

In a live performance the more distant real acoustic sources surely have a poorer high-frequency spectrum than the nearer ones, which are the ones that NPS will correct and re-center more.

Consider a typical listening condition in which one violin is centered 3 m from you and a second one is behind the first at a distance of 10 m. From the center of the listening area it will prove very difficult to perceive the difference in distance between the two violins, as the only information you can rely on is the difference in spectrum and level, which could equally well arise from different instruments being played differently. But if you now move towards one side, thanks to the NPS perspective correction the nearer violin (whose spectrum is richer in the frequencies that NPS uses for its horizontal operation) will remain centred, while the farther one will shift in the direction of your lateral movement. This is the additional information that NPS gives your auditory system, easily enabling you to reconstruct a more natural perception of depth.
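The level and spectrum cues available in the two-violin example can be put into rough numbers. The sketch below is only an estimate for direct sound in free-field conditions: the function names are hypothetical, and the high-frequency air absorption coefficient is an assumed order of magnitude, not a measured value.

```python
import math

def distance_level_drop_db(near_m, far_m):
    """Level drop of the direct sound from near_m to far_m (inverse-distance law)."""
    return 20.0 * math.log10(far_m / near_m)

def extra_high_frequency_loss_db(near_m, far_m, air_absorption_db_per_m):
    """Additional high-frequency loss over the extra path length, for an
    assumed air absorption coefficient in dB per metre."""
    return (far_m - near_m) * air_absorption_db_per_m

NEAR_VIOLIN_M = 3.0
FAR_VIOLIN_M = 10.0
ASSUMED_HF_ABSORPTION = 0.1  # dB/m, hypothetical order of magnitude near 10 kHz

broadband_drop = distance_level_drop_db(NEAR_VIOLIN_M, FAR_VIOLIN_M)
hf_extra = extra_high_frequency_loss_db(
    NEAR_VIOLIN_M, FAR_VIOLIN_M, ASSUMED_HF_ABSORPTION
)

print(f"far violin is about {broadband_drop:.1f} dB lower overall")
print(f"and about {hf_extra:.1f} dB lower again at high frequencies")
```

A level difference of this size could just as easily be produced by a quieter instrument or a softer bowing, which is why these cues alone leave the distance ambiguous from the central listening position.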

THE FILTER EFFECT

The sensation we usually experience when listening to a live performance, compared with a reproduced one, is always similar to taking off a pair of eyeglasses. Our image perception will certainly be less precise, but it feels as though we have simply removed a kind of filter that stood between us and the external world, one that always reduces our ability to perceive the natural three-dimensionality of the scene and the absolute independence of each of its details from the background.

Now imagine looking at the external world through a large window.

Even if the glass were transparent and very clean, our perception would never be the same as if it did not exist.

Now put a coloured glass between us and what we are looking at.

Whatever the colour and its intensity, its tone will sum algebraically with that of each particular object in the scene, changing all of them in the same direction and applying to all of them the same light-frequency transfer function.

And this alteration of the natural light spectrum enables us to easily perceive the filter’s presence, even though we do not know exactly the original colours of each object.

The same thing happens when an acoustic scene is filtered by a single transducer. Its frequency response alters the acoustic spectra of all the reproduced sources in the same way, letting us perceive the speaker’s presence between us and the real sound.
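The common signature left by a single transducer can be shown with a few numbers. This is a minimal sketch with hypothetical data: the three-band source spectra and the transducer response are invented figures in dB, chosen only to show that adding one response to every source produces an identical change in each.

```python
def apply_response(source_db, response_db):
    """Add a transducer's per-band response (dB) to a source spectrum (dB)."""
    return [s + r for s, r in zip(source_db, response_db)]

def coloration(source_db, reproduced_db):
    """Per-band change introduced by reproduction, in dB."""
    return [out - src for src, out in zip(source_db, reproduced_db)]

# Hypothetical levels (dB) in three bands: low, mid, high.
SOURCES = {
    "double bass": [80, 65, 40],
    "voice": [60, 75, 55],
    "cymbal": [35, 60, 78],
}

# Assumed response of the one transducer shared by every source.
SINGLE_TRANSDUCER = [-3, 1, -2]

changes = {
    name: coloration(spectrum, apply_response(spectrum, SINGLE_TRANSDUCER))
    for name, spectrum in SOURCES.items()
}

for name, change in changes.items():
    print(f"{name:>11}: change per band = {change} dB")
```

Every source, whatever its own spectrum, carries exactly the same per-band change, and it is this shared pattern that gives the transducer away.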

How can we reduce and limit this phenomenon?

Simply by using a different transducer for every scene element.

So the system that can help to reduce the “filter effect” is precisely the NPS-1000 Insignis, with which every instrument, characterized by its own spectrum, is reproduced to a very large extent by a different group of transducers.

– A single-way system is, from this point of view, the worst possible.

– A multi-way system improves with the number of ways into which the acoustic spectrum is divided.

THE COCKTAIL PARTY EFFECT

The cocktail party effect is an interesting phenomenon that tells us a lot about how our attention can affect how perceptual stimuli are processed. During a conversation at a party, where there are a lot of other conversations occurring, and music nearby, we somehow manage to tune into the voice of the person that we are talking to. All of the other noise is filtered out and largely ignored. This generally happens in all perception: only part of the stimulus is passed on for conscious analysis. This enables us to filter out the rest of the conversation at a party and concentrate on only one person’s voice. The ‘figure-ground’ phenomenon is the separation of the auditory input into the components of figure (the attended signal) and ground (everything else, in the background). However, an interesting point is that if someone on the other side of the room suddenly sees us and calls out our name, we generally notice quite quickly. This suggests that some processing of the other information does occur, often enough to enable us to pick up on bits of it in certain situations, for example if it is a familiar voice.

Cherry (1953) discovered that this ability is based upon the characteristics of the speech that we are attending to, and its differences from other sounds that are present. In the case of separating one voice from many in a room, the ability to do this depends on characteristics of the speech that in turn depend on the gender of the speaker, the intensity of the voice and the location of the speaker. Cherry discovered that if a subject is presented with a different message in each ear through a pair of headphones at the same time, and the same voice is used for both, then it is much more difficult to separate the two messages on the basis of their meaning alone, which is the only cue left. Cherry also discovered that, if one of the messages that the subject was hearing was shadowed, that is, the subject had to repeat out loud what was said in one of the messages, then information from the other message was very rarely extracted. In fact, even when the unattended message was changed somehow, such as by switching to a foreign language or being reversed, subjects very rarely noticed. However, if the unattended signal had a non-speech sound suddenly added to it, subjects almost always noticed (after Eysenck and Keane, 1994). This explains how we can detect a sound such as tyres screeching when we cross the road, as explained on the timbre page (contrast with previous sounds).